Your Daily Best of AI™ News

🚨 Nordic startup Lovable hit $200M revenue just 12 months after launch, a pace that suggests European founders are finally taking billion-dollar swings instead of playing it safe. The vibe coding platform now has 2.3M users building apps without writing code.

The Big Idea

The only 7 moats that matter for AI companies.

AI infrastructure is a knife fight. Foundation models are racing to the bottom on price. Developer tools are getting commoditized.

If you're building an AI company, infrastructure isn't your moat. Here are the only seven that matter, plus Moat 0, the one that buys you time to build them:

Moat 0: Speed (Most Important Early On)

Cursor ships features in 1-day sprints. Big companies need weeks or months. Speed is your only early advantage.

When everyone has access to the same models, the same APIs, and the same talent pool, the only differentiation is how fast you can ship, iterate, and respond to user feedback.

Speed isn't a permanent moat. But it's the only moat that buys you time to build the others.

Moat 1: Process Power

The last 10% to reach 99% reliability takes 10-100x the effort of a hackathon demo.

Examples: Casetext (legal AI), Greenlight (KYC for banks), Plaid (thousands of financial integrations).

Why it works: Competitors won't do the painstaking edge case work. They'll ship the demo, declare victory, and move on. You'll own the market by being the only one who actually works in production.

Moat 2: Cornered Resource

Hard-to-acquire assets: regulatory approvals, unique data access, customer relationships.

Examples: Scale AI and Palantir (DoD contracts), forward-deployed engineers mapping customer workflows, proprietary datasets from enterprise customers.

AI companies that own unique data or exclusive access to regulated industries have moats that can't be replicated by better models or cheaper APIs.

Moat 3: Switching Costs

Three types in AI:

Traditional: Data migration pain (AI actually reduces this).

New: Deep customization. 6-12 month pilots building custom workflows that integrate into operations (Happy Robot, Salient).

Consumer: ChatGPT memory makes switching feel like starting over. You've trained the AI on your preferences, your context, your workflows. Switching to a competitor means rebuilding that from scratch.

Moat 4: Counter-Positioning

Doing what incumbents can't without cannibalizing themselves.

Pricing: SaaS charges per-seat. AI success = fewer seats = less revenue. Startups charge per-task or per-outcome.

Second mover advantage: Legora vs Harvey (application layer vs fine-tuning).

The OpenAI case: Google couldn't disrupt search ads. OpenAI had no business to protect.

If your AI product makes the incumbent's business model obsolete, they can't compete without destroying their own revenue. That's a moat.
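To make the pricing point concrete, here is a minimal sketch of the arithmetic. Every number in it (task volume, tasks per seat, both prices) is a hypothetical assumption chosen for illustration, not data from any company named above. It compares what a per-seat vendor and a per-task vendor each bill the same customer once AI lets fewer people handle the same workload.

```python
# Illustrative only: all figures below are hypothetical assumptions.
TASKS_PER_MONTH = 10_000          # customer's workload stays constant
TASKS_PER_SEAT_BEFORE_AI = 100    # one person handles 100 tasks/month
TASKS_PER_SEAT_WITH_AI = 400      # AI makes each person 4x as productive
PRICE_PER_SEAT = 100.0            # per-seat SaaS price, $/month
PRICE_PER_TASK = 1.0              # per-task startup price, $/task


def seats_needed(tasks: int, tasks_per_seat: int) -> int:
    """Seats required to cover the workload (ceiling division)."""
    return -(-tasks // tasks_per_seat)


def per_seat_revenue(seats: int, price_per_seat: float) -> float:
    """Monthly revenue for a vendor that bills per seat."""
    return seats * price_per_seat


def per_task_revenue(tasks: int, price_per_task: float) -> float:
    """Monthly revenue for a vendor that bills per completed task."""
    return tasks * price_per_task


seats_before = seats_needed(TASKS_PER_MONTH, TASKS_PER_SEAT_BEFORE_AI)
seats_after = seats_needed(TASKS_PER_MONTH, TASKS_PER_SEAT_WITH_AI)

incumbent_before = per_seat_revenue(seats_before, PRICE_PER_SEAT)
incumbent_after = per_seat_revenue(seats_after, PRICE_PER_SEAT)
startup_revenue = per_task_revenue(TASKS_PER_MONTH, PRICE_PER_TASK)

print(f"Seats needed: {seats_before} before AI, {seats_after} with AI")
print(f"Per-seat vendor revenue: ${incumbent_before:,.0f} -> ${incumbent_after:,.0f}")
print(f"Per-task vendor revenue: ${startup_revenue:,.0f} (unchanged by seat count)")
```

Under these assumed numbers, the per-seat vendor loses revenue in direct proportion to how well the AI works, while the per-task vendor's revenue tracks the work done. That asymmetry is what makes it hard for an incumbent to match the pricing.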

Moat 5: Brand

ChatGPT has more daily users than Google Gemini despite equivalent models and Google's billion+ user base.

Why: Speed + no legacy business model to protect.

Brand in AI isn't about marketing. It's about trust. Users trust ChatGPT because it was first, it was fast, and it delivered results. That trust compounds.

Moat 6: Network Effects

More users → more data → better models → better product → more users.

Examples:

- Cursor (all keystrokes train autocomplete)

- ChatGPT (conversations train future GPT models)

- SuperX.so (more users = better AI content)

- Ourank.so (more users = more people helping raise domain reputation)

Network effects in AI are data feedback loops. Every user interaction makes the product better for everyone else. That's a compounding moat.

Moat 7: Scale Economies

Massive upfront investment creates cost advantages.

Examples: Foundation model training, web crawling (Exa, Channel 3), infrastructure plays.

Note: Less relevant at the application layer for most startups. But if you're building infrastructure, scale is the only moat that matters.

What this means

If you're starting an AI company, pick one or two of these moats and build everything around them. Infrastructure alone won't save you.

If you're evaluating AI companies, look for which moats they're building. The ones with none will get commoditized. The ones with multiple will compound.

If you're competing in AI, don't compete on model quality. Compete on the moats the incumbents can't replicate.

What's next

Expect AI companies to layer moats. The winners won't have one moat. They'll have three or four that reinforce each other.

Speed buys you time to build process power. Process power earns you customer trust. Trust creates switching costs. Switching costs generate network effects.

The AI companies that survive the infrastructure race will be the ones that stacked moats while everyone else was optimizing latency.

BTW: The best AI companies don't talk about their models. They talk about their workflows, their customers, and their data. That's how you know they understand moats.

Today’s Top Story

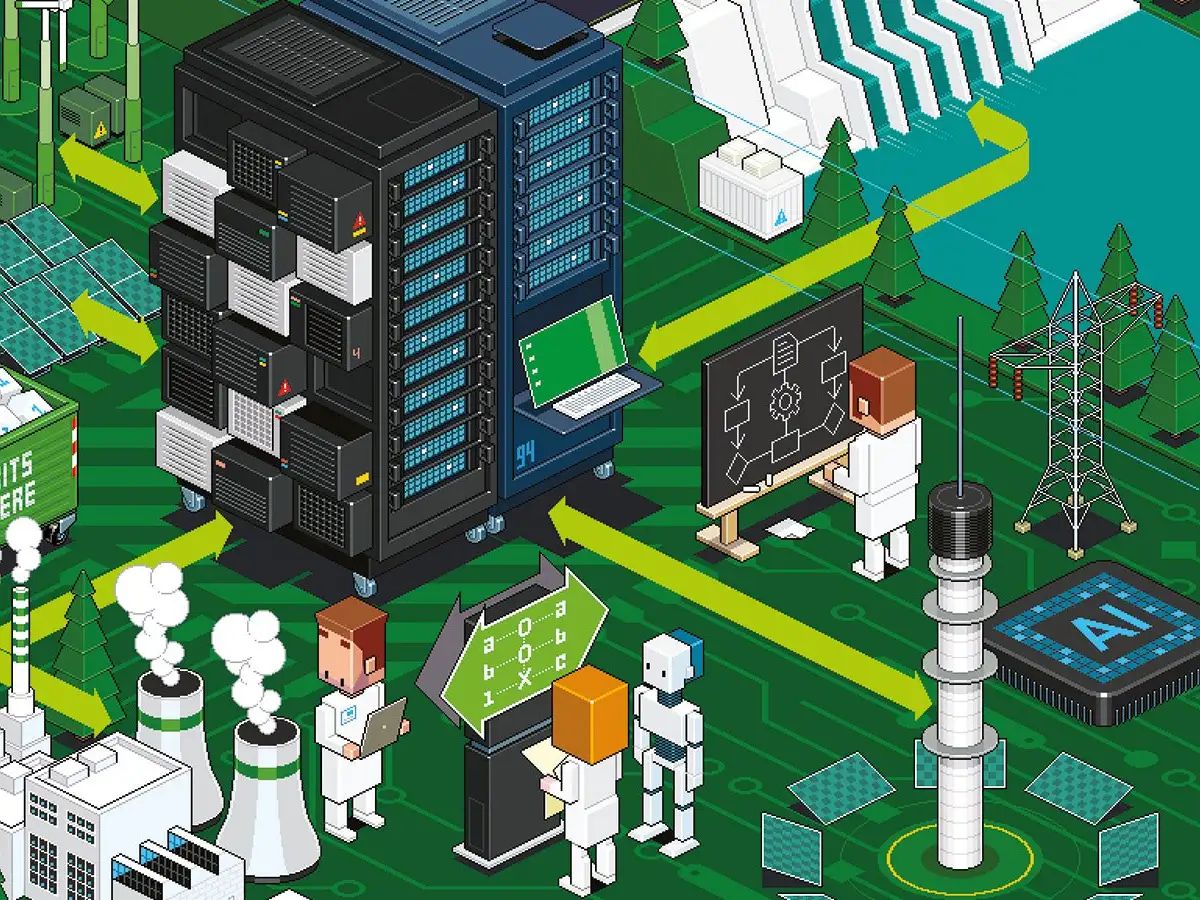

AI's energy problem just got a number

The Recap: xAI's Memphis data center will add a solar farm producing only 10% of its power needs—a concrete number that exposes the massive energy gap AI infrastructure faces. The facility currently uses 150 megawatts (enough to power 100,000+ homes), with Musk planning to double capacity by year-end.

Unpacked:

The 88-acre solar farm is a token gesture for a data center that powers Grok and plans to expand to 300+ megawatts of consumption.

Tennessee Valley Authority approved the initial 150MW supply, but the solar component won't meaningfully offset the facility's grid dependence.

This 10% figure reveals what the industry won't say: AI data centers can't run on renewables alone, despite public commitments to sustainability.

Bottom line: The solar farm math doesn't work, and xAI just proved it with specifics. When a company building cutting-edge AI infrastructure can only offset 10% of power needs with dedicated solar, it signals that the entire industry's energy problem is far larger than marketing narratives suggest. The gap between AI's power demands and renewable capacity isn't closing—it's widening.
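A quick back-of-the-envelope check of the numbers above, as a sketch rather than an engineering estimate. It assumes the 10% figure is measured against the current 150 MW draw and that the solar farm's output stays fixed while the facility doubles. Both are simplifying assumptions, and the variable names are ours.

```python
# Figures from the story: 150 MW today, a planned doubling, 10% solar share.
CURRENT_LOAD_MW = 150.0
PLANNED_LOAD_MW = 300.0
SOLAR_SHARE_OF_CURRENT_LOAD = 0.10  # assumption: 10% refers to today's load


def solar_share(solar_mw: float, load_mw: float) -> float:
    """Fraction of the facility's load covered by solar output."""
    return solar_mw / load_mw


# Solar output implied by the 10% figure (assumed constant after expansion).
solar_mw = CURRENT_LOAD_MW * SOLAR_SHARE_OF_CURRENT_LOAD

share_after_expansion = solar_share(solar_mw, PLANNED_LOAD_MW)
gap_now_mw = CURRENT_LOAD_MW - solar_mw
gap_after_mw = PLANNED_LOAD_MW - solar_mw

print(f"Implied solar output: {solar_mw:.0f} MW")
print(f"Solar share after doubling: {share_after_expansion:.0%}")
print(f"Non-solar demand: {gap_now_mw:.0f} MW now, {gap_after_mw:.0f} MW after expansion")
```

Under those assumptions, doubling the load halves the solar share, which is the sense in which the gap widens: demand grows faster than the dedicated renewable supply.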

Other News

49 US AI startups raised $100M+ in 2025—a metric revealing capital concentration among perceived winners as mega-rounds exceed 75% of all AI funding.

OpenAI claims teen 'misused' ChatGPT before suicide, a defense that tests where AI liability ends and user responsibility begins as the company faces multiple similar lawsuits.

Redwood Materials cuts 5% of staff despite $350M raise—revealing even well-funded battery recycling plays face execution pressure in scaling operations.

GM's software executive exodus signals automakers still can't merge legacy operations with tech-first culture as leadership turnover continues.

TSMC sues Intel's new exec for trade secrets—escalating the semiconductor talent war into legal battleground as chip competition intensifies.

UK's Online Safety Act drives VPN adoption, revealing how regulatory overreach accelerates the very circumvention it aims to prevent.

AI shopping assistants recommend outdated products—exposing training data lag as a fundamental flaw in real-time commerce applications.

Apple projected to overtake Samsung in 2025 phone sales—marking a strategic shift in how premium pricing beats volume plays.

Prompt Of The Day

Copy and paste this prompt 👇

"Create a Facebook ad that incorporates [humor] to engage and entertain my [target audience] and make my [brand/service/product] more approachable and relatable."

Best of AI™ Team
